Why Performance Tasks Become Ambiguous (and How to Prevent It)

Performance tasks and projects are powerful because they ask learners to apply knowledge in authentic ways. They also fail easily when directions, evidence, and scoring expectations are fuzzy. Ambiguity usually appears in three places: the task prompt (what to do), the evidence (what to submit), and the rubric (how it will be judged). When any of these is underspecified, students fill gaps with guesses, and teachers end up grading “vibes” instead of observable work. The goal of this chapter is to show how to use AI prompts to remove ambiguity from performance tasks and project rubrics by making requirements explicit, measurable, and aligned to the actual artifacts students produce.

A useful mindset is to treat a performance task like a contract: it must define deliverables, constraints, and evaluation criteria in language that two different teachers could interpret the same way. If you can hand the task to a colleague and they can predict what strong work looks like without asking you questions, you have reduced ambiguity. AI can help you draft that contract, but only if you ask for the right outputs: checklists, boundary conditions, observable indicators, and “non-examples” that clarify what does not meet expectations.

Define the Performance Task as an Evidence Package

To remove ambiguity, start by defining the task as an evidence package rather than a general activity. An evidence package is a set of concrete artifacts that demonstrate learning. For example, “Create a presentation about renewable energy” is an activity; an evidence package might be: (1) a 6-slide deck, (2) a one-page speaker notes document with citations, (3) a data visualization created from a provided dataset, and (4) a short reflection explaining one design decision. When students know exactly what artifacts are required, they can plan and self-check.

When you prompt an AI tool, ask it to output deliverables in a numbered list with format, length, and required components. Also ask for constraints that prevent scope creep (time, tools allowed, number of sources, audience). This is especially important for projects, where students can easily overbuild or underbuild if the boundaries are unclear.

AI prompt: Convert an activity into an evidence package

You are an instructional designer. Convert this project idea into an evidence package with unambiguous deliverables. Project idea: [paste idea]. Output: (1) Deliverables list with format, length, and required components; (2) Constraints (time, tools, collaboration rules, sources); (3) Submission checklist students can use; (4) Teacher quick-check list for completeness. Keep language student-friendly and specific.

After you get the output, scan for vague verbs (understand, explore, learn about, discuss) and replace them with observable actions (calculate, compare, justify, annotate, model, revise). If the AI includes “research” as a requirement, specify what counts as research evidence (e.g., annotated bibliography with 3 sources, each summarized in 2–3 sentences, with one direct quote and one paraphrase).
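If you review many AI drafts, the vague-verb scan described above can be partly automated. The following is a minimal sketch, not a complete tool: the function name `find_vague_verbs` and the sample sentence are hypothetical, and the verb list simply copies the four examples named in this chapter.

```python
import re

# Vague verbs named in this chapter; extend the list for your own context.
VAGUE_VERBS = ["understand", "explore", "learn about", "discuss"]


def find_vague_verbs(text: str) -> list[str]:
    """Return the vague verbs that appear in text, in VAGUE_VERBS order."""
    found = []
    for verb in VAGUE_VERBS:
        # Word boundaries mean only the exact form matches: "discuss" is
        # flagged, but "discussion" and "discusses" are not.
        pattern = r"\b" + re.escape(verb) + r"\b"
        if re.search(pattern, text, flags=re.IGNORECASE):
            found.append(verb)
    return found


# Hypothetical sentence from a draft set of directions.
draft = "Students will explore renewable energy and discuss their findings."
print(find_vague_verbs(draft))
```

The scan only flags candidates. Choosing the observable replacement (calculate, compare, justify, and so on) remains a teacher decision.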

Write Task Directions That Are Testable

Directions are unambiguous when they can be tested: a student can point to the work and show where each requirement is met. This means each direction should map to a visible feature in the final artifact. Instead of “Make it engaging,” specify “Include one chart with a title and labeled axes” or “Include two visuals that directly support two different claims.” Instead of “Use good grammar,” specify “No more than 3 sentence-level errors per page; run a spelling and grammar check; read aloud once and revise.”

A practical method is to write directions in two layers: (1) a short overview that explains the purpose and audience, and (2) a requirement list that is purely checkable. The overview motivates; the requirement list prevents confusion. AI can help you generate both layers, but you should insist that the requirement list contains measurable items only.

Step-by-step: Turn vague directions into checkable requirements

- Step 1: List the artifacts students will submit (the evidence package).

- Step 2: For each artifact, list required components (sections, elements, features).

- Step 3: Add minimums and maximums (length, number of examples, number of sources).

- Step 4: Add quality constraints that are observable (citations present, labels included, calculations shown).

- Step 5: Add “not allowed” boundaries (no AI-generated images without attribution, no copied text, no unsupported claims).

- Step 6: Convert each item into a student checklist with yes/no boxes.
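The outcome of the steps above is a list of yes/no items, which can be modeled very simply. This is an illustrative sketch only: the `ChecklistItem` class, the `unmet` helper, and the three sample requirements are assumptions loosely based on the renewable energy example, not a prescribed format.

```python
from dataclasses import dataclass


@dataclass
class ChecklistItem:
    requirement: str   # must be observable in the final submission
    met: bool = False  # the yes/no box


def unmet(items: list[ChecklistItem]) -> list[str]:
    """Return the requirements whose box is still unchecked."""
    return [item.requirement for item in items if not item.met]


# Hypothetical checklist for the renewable energy presentation.
checklist = [
    ChecklistItem("Deck has exactly 6 slides", met=True),
    ChecklistItem("Chart has a title and labeled axes", met=True),
    ChecklistItem("Speaker notes cite at least 3 sources", met=False),
]
print(unmet(checklist))
```

If a requirement cannot be expressed as a true/false value like this, it is probably a quality judgment and belongs in the rubric rather than the checklist.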

AI prompt: Rewrite directions into a measurable checklist

Rewrite the following project directions into a measurable checklist. Every checklist item must be observable in the final submission (yes/no). If a direction is vague, replace it with a measurable requirement and note the change in a separate section called “Clarifications Added.” Directions: [paste directions]. Deliverables: [paste evidence package].

Use the “Clarifications Added” section to decide what you truly want to require. If the AI adds something you did not intend (for example, requiring peer review), remove it. The checklist should reflect your actual expectations, not generic project advice.

Design Rubrics That Match the Artifacts (Not the Topic)

Ambiguous rubrics often score the topic rather than the work. For example, “Demonstrates understanding of ecosystems” is hard to score unless you specify what evidence of understanding looks like in the artifact. A rubric becomes unambiguous when each criterion points to a feature of the submitted work and uses descriptors that are observable. Think “Claim is supported by at least two pieces of evidence from the dataset” rather than “Uses evidence well.”

Another common ambiguity is mixing multiple skills into one criterion, such as “Organization and clarity and grammar.” If a student is clear but has grammar errors, how do you score it? Unbundle criteria so each one measures one main construct. If you must combine, define how to weigh subparts (e.g., clarity 70%, grammar 30%) or provide separate rows.
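To see how stated weights settle the question for a combined criterion, here is a small worked sketch using the 70/30 split mentioned above. The function name `weighted_level` and the sample levels (clarity at Level 4, grammar at Level 2) are assumptions for illustration.

```python
def weighted_level(levels: dict[str, int], weights_pct: dict[str, int]) -> float:
    """Combine sub-part levels into one score using percentage weights."""
    if levels.keys() != weights_pct.keys():
        raise ValueError("every sub-part needs exactly one weight")
    if sum(weights_pct.values()) != 100:
        raise ValueError("weights must total 100 percent")
    # Integer percentages keep the arithmetic easy to check by hand.
    return sum(levels[name] * weights_pct[name] for name in levels) / 100


# A student who is clear (Level 4) but has grammar errors (Level 2).
score = weighted_level(
    {"clarity": 4, "grammar": 2},
    {"clarity": 70, "grammar": 30},
)
print(score)  # (4 * 70 + 2 * 30) / 100 = 3.4
```

Publishing the weights alongside the rubric lets students reproduce this calculation themselves, which is the point of unbundling.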

Step-by-step: Build an unambiguous project rubric from the evidence package

- Step 1: List the artifacts and their required components.

- Step 2: Decide which components are “completeness” (binary) and which are “quality” (levels).

- Step 3: Create rubric criteria that map to quality components only (avoid scoring completeness twice).

- Step 4: Write level descriptors using observable indicators (counts, presence/absence, accuracy checks, alignment to constraints).

- Step 5: Add a “common pitfalls” note for each criterion to reduce misinterpretation.

- Step 6: Pilot the rubric on two sample submissions (one strong, one weak) and revise unclear language.
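One way to check a draft against the steps above is to store each rubric row with the artifact it scores and then look for rows that point at nothing. The sketch below is hypothetical throughout: the `Criterion` class, the `rubric_problems` helper, and most of the sample descriptors are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Criterion:
    name: str
    artifact: str                # which deliverable this row scores
    descriptors: dict[int, str]  # level -> observable descriptor


def rubric_problems(criteria, artifacts, levels=(4, 3, 2, 1)):
    """List rows that score no real artifact or lack a level descriptor."""
    problems = []
    for c in criteria:
        if c.artifact not in artifacts:
            problems.append(f"{c.name}: no matching artifact '{c.artifact}'")
        missing = [lvl for lvl in levels if not c.descriptors.get(lvl, "").strip()]
        if missing:
            problems.append(f"{c.name}: missing descriptor for level(s) {missing}")
    return problems


# Evidence package from the renewable energy example.
artifacts = {"slide deck", "speaker notes", "data visualization", "reflection"}

criteria = [
    Criterion("Evidence use", "data visualization", {
        4: "Claim is supported by three or more pieces of evidence from the dataset",
        3: "Claim is supported by at least two pieces of evidence from the dataset",
        2: "Claim is supported by one piece of evidence from the dataset",
        1: "Claim has no supporting evidence from the dataset",
    }),
    # A row that scores the topic instead of the work.
    Criterion("Understanding of ecosystems", "topic", {4: "Demonstrates understanding"}),
]

problems = rubric_problems(criteria, artifacts)
for line in problems:
    print(line)
```

A structural check like this cannot judge whether descriptors are observable. It only catches rows that are not tied to a deliverable or are missing levels before you pilot the rubric.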

AI prompt: Generate rubric criteria that map to artifacts

Create a 4-level analytic rubric for this performance task. Inputs: (A) Evidence package deliverables: [paste]. (B) Non-negotiable constraints: [paste]. Output a table-like format with: Criterion name, What to look for (observable indicators), Level 4/3/2/1 descriptors. Rules: (1) Each criterion must map to a specific artifact feature; (2) Avoid vague words (good, strong, clear) unless defined; (3) Do not combine unrelated skills in one criterion; (4) Include a “Common pitfalls” line per criterion.

When you review the rubric draft, look for adjectives without anchors (insightful, compelling, thorough). Replace them with evidence statements. For example, “thorough” can become “addresses all required sub-questions and includes at least one counterpoint.” “Compelling” can become “uses at least one relevant statistic and explains why it matters to the audience.”

Use “Boundary Examples” to Remove Hidden Expectations

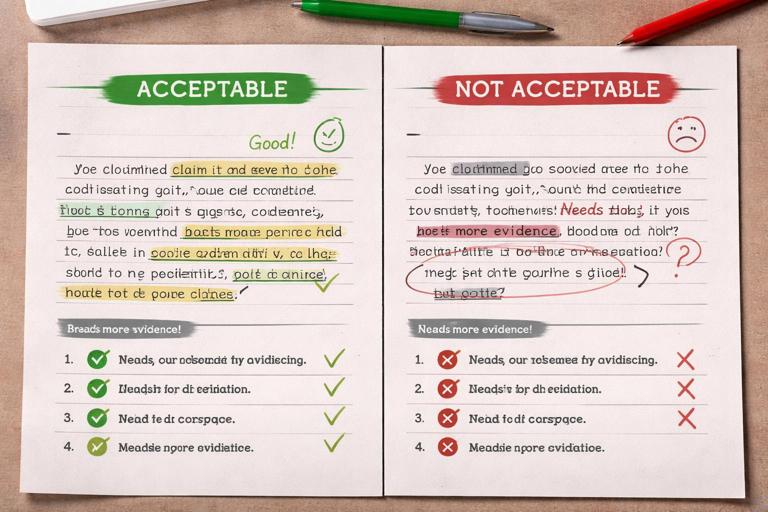

Students often miss expectations that teachers assume are obvious, such as what counts as a credible source, what a “claim” looks like, or how much explanation is enough. Boundary examples clarify the line between levels. These are not full exemplars; they are short snippets that show what meets versus almost meets a criterion. For instance, you can provide two versions of a claim-evidence statement: one that is acceptable and one that is not, with a brief annotation.

AI is useful for generating boundary examples quickly, but you should ensure they match your subject and grade level. The key is to tie each example directly to a rubric criterion and label the exact feature that changes the score.

AI prompt: Create boundary examples for each rubric criterion

For each rubric criterion below, generate two short boundary examples: (1) a Level 3 example that just meets the descriptor, and (2) a Level 2 example that almost meets it but falls short. Keep each example under 80 words (or equivalent snippet). Add a one-sentence annotation explaining the difference using the rubric’s observable indicators. Rubric: [paste rubric]. Context/topic: [paste topic].

Use boundary examples during project launch and again during revision checkpoints. They reduce “I didn’t know you wanted that” because they make the invisible visible.

Separate Completeness, Quality, and Process (So Scores Are Defensible)

Many disputes about project grading come from mixing three different things: completeness (did they submit required parts), quality (how well the parts meet criteria), and process (how they worked). To reduce ambiguity, decide where each belongs. Completeness is best handled with a submission checklist (often ungraded or low-stakes). Quality belongs in the rubric. Process can be assessed separately with a brief process rubric or a set of required logs, but it should not silently influence the product score unless you state it explicitly.

If you want to include process, define the evidence: planning document, revision history, peer feedback notes, or a reflection with specific prompts. Then score process with its own criteria such as “Revision evidence: at least two meaningful changes linked to feedback.” Avoid grading “effort” unless you define it as observable behaviors and artifacts.

AI prompt: Create a separate process rubric and evidence list

Design a simple process assessment for this project that reduces ambiguity. Output: (1) Process evidence students must submit (max 3 items); (2) A 3-level rubric for process only, with observable indicators; (3) A note explaining how process score is weighted relative to product score. Project summary: [paste]. Product rubric: [paste].

Make Rubric Language Consistent Across Criteria

Rubrics become ambiguous when each row uses different logic. One row might use counts (“3 sources”), another uses quality adjectives (“strong reasoning”), and another uses time (“on time”). Consistency helps students understand what “Level 3” generally means. You can standardize descriptors by using the same pattern in each level, such as: accuracy, completeness of reasoning, and alignment to constraints.

A practical technique is to create a “descriptor template” and apply it to each criterion. For example: Level 4 = meets all requirements plus adds a justified enhancement; Level 3 = meets all requirements; Level 2 = meets some requirements with notable gaps; Level 1 = minimal evidence. Then specify what “requirements” means for that criterion using observable indicators.

AI prompt: Normalize rubric descriptors for consistency

Revise this rubric to make level descriptors consistent across criteria while keeping the same meaning. Use a shared pattern for Level 4/3/2/1 (e.g., meets all indicators; meets most; meets some; meets few). Replace vague adjectives with observable indicators. Keep criteria unchanged unless a criterion is double-barreled; if so, split it into two criteria and explain why. Rubric: [paste].

After normalization, check that each criterion still distinguishes levels. If Level 3 and Level 4 sound identical, add a specific “enhancement” indicator for Level 4 that is optional but observable, such as “includes a limitation or counterargument and responds to it.”
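A rough way to spot Level 3 and Level 4 descriptors that sound identical is to compare their wording with Python's standard `difflib` module. This is a sketch under assumptions: the helper name `too_similar`, the 0.9 threshold, and the sample descriptors are arbitrary choices, and text similarity is only a proxy for whether two levels are truly distinguishable.

```python
from difflib import SequenceMatcher


def too_similar(a: str, b: str, threshold: float = 0.9) -> bool:
    """Flag two descriptors whose wording is nearly the same."""
    # ratio() is 1.0 for identical strings and falls toward 0.0
    # as the two strings share less text.
    ratio = SequenceMatcher(None, a.lower(), b.lower()).ratio()
    return ratio >= threshold


# Hypothetical descriptors from one normalized rubric row.
level_3 = "Meets all required indicators."
weak_level_4 = "Meets all required indicators well."
revised_level_4 = "Includes a limitation or counterargument and responds to it."

print(too_similar(level_3, weak_level_4))
print(too_similar(level_3, revised_level_4))
```

A flagged pair is a prompt to reread the row, not a verdict. Two descriptors can differ by a single word and still be clearly distinct if that word is a count or a named feature.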

Prevent AI-Related Ambiguity: What Is Allowed, What Must Be Disclosed

Projects now often involve AI tools, which can introduce a new kind of ambiguity: students may not know what assistance is permitted. If you allow AI for brainstorming but not for final writing, say so. If you allow AI for grammar checking, specify whether students must disclose it. Ambiguity here leads to inconsistent enforcement and student anxiety.

Write an “AI use clause” that includes: permitted uses, prohibited uses, disclosure requirements, and consequences for non-disclosure. Keep it short and concrete. Tie it to the evidence package by requiring a brief tool-use note, such as “List any AI tools used and what you used them for (one sentence each).”

AI prompt: Draft an AI use clause aligned to a project

Draft a student-facing AI use clause for this project. Include: Allowed uses (3–5 bullets), Not allowed (3–5 bullets), Disclosure requirement (exactly what to write and where to submit it), and a fairness note. Keep it clear and non-punitive. Project deliverables: [paste].

Quality Assurance: Stress-Test the Task and Rubric Before Assigning

Even well-written tasks can hide ambiguity until you test them. A fast stress test is to ask: “Could a student meet every requirement and still produce weak learning evidence?” If yes, your rubric may be scoring surface features rather than thinking. Another test: “Could a student produce excellent work but lose points because of an unclear requirement?” If yes, your directions or rubric descriptors need tightening.

You can also run a “two-reader test”: imagine two teachers scoring the same submission. Where would they disagree? Those are the criteria that need more observable indicators or boundary examples. AI can help by acting as a second reader and pointing out where interpretation could vary.

Step-by-step: Stress-test with AI as a second reader

- Step 1: Provide the task directions, evidence package, and rubric.

- Step 2: Provide a short sample student submission (real or teacher-created).

- Step 3: Ask AI to score it with citations to exact rubric language and to flag any ambiguous rubric lines.

- Step 4: Revise the rubric where the AI’s justification feels forced or where multiple scores seem plausible.
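Once you have two sets of scores for the same sample (yours and the AI's, or two teachers'), finding the rows that need attention is a simple comparison. The sketch below is illustrative: the function name `disagreements`, the criterion names, and the levels are all made up.

```python
def disagreements(
    reader_a: dict[str, int], reader_b: dict[str, int]
) -> dict[str, tuple[int, int]]:
    """Return criteria scored by both readers where their levels differ."""
    return {
        criterion: (reader_a[criterion], reader_b[criterion])
        for criterion in reader_a
        if criterion in reader_b and reader_a[criterion] != reader_b[criterion]
    }


# Hypothetical scores for one sample submission.
teacher = {"Evidence use": 3, "Citations": 4, "Visual design": 2}
second_reader = {"Evidence use": 3, "Citations": 3, "Visual design": 4}

flagged = disagreements(teacher, second_reader)
print(flagged)
```

In this made-up case the two-level gap on "Visual design" would be the first row to revisit, since a larger gap suggests the descriptor supports more than one plausible reading.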

AI prompt: Score a sample and flag ambiguity

Act as a second scorer. Score this sample submission using the rubric. For each criterion: assign a level, quote the exact rubric descriptor that matches, and cite the exact part of the student work that supports the score. Then list any rubric lines that were hard to interpret or could reasonably lead to a different score, and propose a clearer rewrite. Task + rubric: [paste]. Sample submission: [paste].

When you revise, prioritize clarity over elegance. Short, literal descriptors beat poetic ones. If a criterion is still hard to score, add one more observable indicator or split the criterion into two.

Practical Templates You Can Reuse

To make this work sustainable, keep a set of reusable templates: an evidence package template, a checklist template, a product rubric template, a process rubric template, and a boundary example template. The power move is to prompt AI to fill the templates rather than asking it to “make a rubric,” because templates force specificity and reduce drift.

Template: Evidence package (copy/paste)

Project name (student-facing): ___
Audience: ___
Purpose: ___
Time available: ___
Collaboration: ___
Tools allowed: ___
Tools not allowed: ___
Sources/citations: ___
Submission format: ___
Deliverables:
1) ___ (format/length/components)
2) ___
3) ___
Completeness checklist (yes/no): ___

Template: Rubric row with observable indicators

Criterion: ___
What to look for (observable indicators):
- ___
- ___
- ___
Level 4: ___
Level 3: ___
Level 2: ___
Level 1: ___
Common pitfalls: ___

AI prompt: Fill the templates for a new project

Fill in these two templates for the following project. Keep everything unambiguous and observable. Project description: [paste]. Grade/age: [paste]. Constraints: [paste]. Templates: [paste the Evidence package template and Rubric row template].

As you build a library of these project “contracts,” you will notice that ambiguity drops fastest when you consistently define deliverables, use checkable requirements, and write rubric descriptors that point to visible evidence in student work.