Why governance matters in a modular brand system

Once a brand system is modular and distributed as reusable assets, the main risk shifts from “we don’t have standards” to “standards exist, but teams interpret them differently.” Governance is the operating model that keeps the system coherent while allowing teams to move fast. It defines who can decide, how decisions are made, how changes are introduced, and how compliance is verified across products, regions, and vendors.

Consistency enforcement is not the same as policing. In a healthy governance model, enforcement is mostly automatic (through tooling, templates, and guardrails), occasionally procedural (reviews and approvals), and rarely punitive (escalations only for high-risk issues). The goal is to reduce cognitive load for creators and reduce brand risk for the organization.

Core governance concepts (without rehashing design specs)

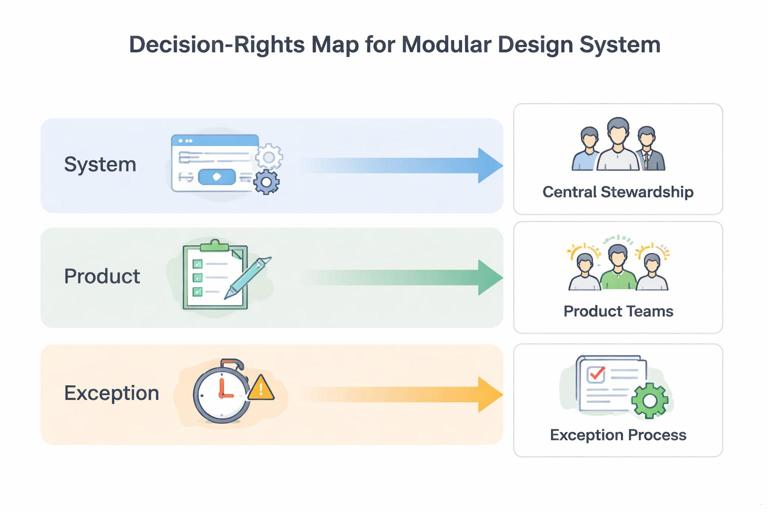

Decision rights: who gets to decide what

Governance starts by separating decisions into categories and assigning clear owners. Without explicit decision rights, teams either block each other (too many approvers) or diverge (everyone ships their own variant).

- System decisions: changes that affect shared foundations or cross-channel behavior (e.g., introducing a new component family, deprecating a pattern, altering accessibility requirements). These require centralized stewardship.

- Product decisions: choices within allowed constraints for a specific product or campaign (e.g., selecting from approved components, composing layouts, choosing imagery within guidelines). These should be owned by product teams.

- Exception decisions: temporary deviations or experimental variants that may later be adopted. These require a defined exception process with time limits and measurement.

Single source of truth (SSOT) vs. local autonomy

In modular systems, the SSOT is not a PDF; it is the combination of canonical assets, component definitions, and release notes that teams can integrate. Governance defines what must be centralized (to prevent fragmentation) and what can be localized (to support speed and market needs). A practical rule: centralize anything that would create incompatibility if duplicated, and localize anything that can vary without breaking recognition or user experience.

Change management as a product practice

Treat the brand system as a product with users (designers, engineers, marketers, agencies). Governance should include intake, prioritization, roadmapping, releases, and support. Consistency enforcement becomes easier when teams trust that the system evolves predictably and responds to real needs.

Common governance models (and when to use them)

Centralized model (Brand/DesignOps as gatekeeper)

A central team owns standards, approves changes, and often reviews implementations. This works best when brand risk is high (regulated industries, high-visibility consumer brands) or when the organization is early in maturity and needs strong alignment.

- Pros: high consistency, clear accountability, fewer parallel variants.

- Cons: bottlenecks, slower experimentation, central team becomes overloaded.

Federated model (hub-and-spoke)

A central “hub” owns the core system, while “spokes” (product or regional teams) own extensions within defined boundaries. Spokes contribute back through a controlled contribution process.

- Pros: scales across many teams, balances speed and consistency, encourages shared ownership.

- Cons: requires strong processes and tooling; without them, extensions become forks.

Distributed model (standards by contract)

Teams operate with high autonomy and align through contracts: shared interfaces, automated checks, and agreed release processes. This is common in engineering-heavy organizations with mature CI/CD and strong platform practices.

- Pros: fast, resilient, minimal central bottlenecks.

- Cons: requires high discipline; brand drift can occur if contracts are weak or not enforced.

Hybrid model (most common in practice)

Many organizations use a hybrid: centralized governance for high-risk surfaces (homepage, top-level navigation, core UI library, flagship campaigns) and federated governance for lower-risk surfaces (regional landing pages, internal tools, experimental features). The key is to define the boundary explicitly so teams know when they are in “strict mode” vs. “flex mode.”

Governance roles and responsibilities

System Owner (Brand System Lead)

Accountable for coherence and roadmap. Owns decision rights for system-level changes, chairs governance meetings, and ensures releases and deprecations are managed.

Maintainers (Design + Engineering)

Maintain the canonical libraries, implement updates, manage backlog, and ensure quality. In a federated model, maintainers also mentor contributors and review submissions.

Stewards/Champions (embedded in teams)

Represent the system within product or regional teams. They help teams adopt updates, surface needs, and prevent local workarounds from becoming permanent drift.

Approvers for risk domains

Some changes require domain approval: legal, accessibility, security, localization, or marketing compliance. Governance should specify when these approvers are required, and provide pre-approved patterns to avoid repeated reviews.

Vendors and agencies

External partners need explicit onboarding, access to canonical assets, and a defined review path. Governance should treat vendors as system users with limited permissions and clear deliverable requirements.

Consistency enforcement mechanisms (from soft to hard)

1) Guardrails by default (templates, starter kits, locked primitives)

The most effective enforcement is prevention: make the correct path the easiest path. Examples include pre-built templates for common deliverables, component-based page builders, and locked base styles in shared libraries. Teams should be able to assemble outputs without inventing new variants.

2) Automated checks (linting, visual regression, accessibility gates)

Automated enforcement reduces subjective debates. Typical checks include:

- Design linting: detects off-system colors, incorrect component usage, missing states, or spacing anomalies in design files (where tooling supports it).

- Code linting: enforces usage of approved components and prevents hard-coded values that bypass the system.

- Visual regression tests: catch unintended changes to shared components and key screens.

- Accessibility gates: block releases that fail defined thresholds (contrast, focus order, keyboard support).

Governance defines which checks are mandatory for which surfaces (e.g., “Tier 1 experiences require all checks; Tier 3 internal tools require only accessibility gates”).
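
As an illustration of the code-linting check listed above, here is a minimal sketch that scans a stylesheet for hard-coded hex colors outside an approved palette. The palette values and the function names are assumptions made for the example; a real system would read the approved values from its own canonical source.

```python
import re

# Hypothetical approved palette; a real system would load this from its token source.
APPROVED_COLORS = {"#0a84ff", "#1c1c1e", "#ffffff"}

# Matches 3- or 6-digit hex colors such as #fff or #0A84FF.
HEX_COLOR = re.compile(r"#(?:[0-9a-fA-F]{6}|[0-9a-fA-F]{3})\b")


def normalize(color: str) -> str:
    """Lowercase a hex color and expand shorthand (#abc -> #aabbcc)."""
    color = color.lower()
    if len(color) == 4:
        color = "#" + "".join(ch * 2 for ch in color[1:])
    return color


def find_off_system_colors(source: str) -> list[tuple[int, str]]:
    """Return (line_number, color) pairs for hex colors outside the approved palette."""
    findings = []
    for line_number, line in enumerate(source.splitlines(), start=1):
        for match in HEX_COLOR.finditer(line):
            color = normalize(match.group(0))
            if color not in APPROVED_COLORS:
                findings.append((line_number, color))
    return findings


stylesheet = ".btn { color: #FFF; background: #0A84FF; }\n.promo { border-color: #ff00aa; }"
print(find_off_system_colors(stylesheet))  # [(2, '#ff00aa')]
```

A regex scan like this is coarse (an ID selector such as `#add` would be flagged too), so it works best as an early warning that points people toward the shared values rather than as the only gate.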

3) Review rituals (lightweight, time-boxed)

Human review is still necessary for nuance: brand expression, tone, and edge cases. To avoid bottlenecks, governance should standardize review formats:

- Office hours: weekly drop-in sessions for quick alignment.

- Design crit with system lens: focuses on correct component usage and consistency risks.

- Pre-launch brand check: only for Tier 1 surfaces; time-boxed with a checklist.

4) Approvals and exceptions (rare, documented)

Approvals should be rare, not the default. When approvals are required, define a clear SLA (e.g., 2 business days) and a checklist to reduce back-and-forth. Exceptions (approved deviations from the system) should be logged, time-bound, and revisited.
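
To make an SLA such as "2 business days" unambiguous, the due date can be computed rather than estimated. The sketch below assumes a Monday-to-Friday working week and ignores public holidays; the function name is hypothetical.

```python
from datetime import date, timedelta


def sla_due_date(submitted: date, business_days: int = 2) -> date:
    """Return the date an approval is due, skipping Saturdays and Sundays."""
    due = submitted
    remaining = business_days
    while remaining > 0:
        due += timedelta(days=1)
        if due.weekday() < 5:  # Monday is 0, Friday is 4
            remaining -= 1
    return due


# A request submitted on Friday 2024-03-01 is due the following Tuesday.
print(sla_due_date(date(2024, 3, 1)))  # 2024-03-05
```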

Step-by-step: setting up a governance model that scales

Step 1: Segment your ecosystem by risk and visibility

Create a simple tiering model that determines how strict enforcement must be. Example:

- Tier 1: flagship marketing pages, homepage, core product flows, app shell, major campaigns.

- Tier 2: secondary product areas, regional marketing pages, partner co-marketing.

- Tier 3: internal tools, prototypes, low-visibility utilities.

For each tier, define required enforcement: automated checks, review steps, and approval needs. This prevents over-governing low-risk work and under-governing high-risk work.
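
One way to keep the tier rules from living only in a document is to express them as data that tooling can read. The sketch below is an assumption-laden example: the check names, the review step names, and the Tier 2 policy are hypothetical, while the Tier 1 ("all checks") and Tier 3 ("accessibility only") rows mirror the example given earlier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TierPolicy:
    automated_checks: frozenset[str]
    review_steps: tuple[str, ...]
    approval_required: bool


ALL_CHECKS = frozenset({"design_lint", "code_lint", "visual_regression", "accessibility"})

# Tier 2 values are illustrative; each organization sets its own.
TIER_POLICIES = {
    1: TierPolicy(ALL_CHECKS, ("midpoint_review", "pre_launch_brand_check"), True),
    2: TierPolicy(frozenset({"code_lint", "visual_regression", "accessibility"}),
                  ("midpoint_review",), False),
    3: TierPolicy(frozenset({"accessibility"}), (), False),
}


def missing_checks(tier: int, passed: set[str]) -> list[str]:
    """Return the required checks that have not passed, sorted for stable output."""
    return sorted(TIER_POLICIES[tier].automated_checks - passed)


print(missing_checks(1, {"code_lint", "accessibility"}))  # ['design_lint', 'visual_regression']
print(missing_checks(3, {"accessibility"}))               # []
```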

Step 2: Define decision rights with a RACI matrix

Use a RACI matrix (Responsible, Accountable, Consulted, Informed) to assign ownership for common decision types. Keep it short and operational. Example decision types:

- Adding a new shared component

- Deprecating an existing component

- Introducing a new campaign layout template

- Allowing a regional variation

- Granting an exception for a partner requirement

Publish the RACI where teams work (issue tracker, system portal). Governance fails when people have to guess who to ask.
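
A RACI matrix is easy to keep machine-readable, which also makes it easy to validate. The sketch below is illustrative only: the role names and assignments are hypothetical, and the two validation rules (exactly one Accountable, at least one Responsible per decision) are a common convention rather than something this system prescribes.

```python
# Decision type -> {role: RACI code}. All assignments here are hypothetical.
RACI = {
    "add_shared_component": {
        "system_owner": "A", "maintainers": "R", "champions": "C", "product_teams": "I",
    },
    "deprecate_component": {
        "system_owner": "A", "maintainers": "R", "champions": "C", "product_teams": "I",
    },
    "allow_regional_variation": {
        "system_owner": "A", "regional_team": "R", "maintainers": "C", "localization": "C",
    },
}


def accountable_for(decision: str) -> str:
    """Answer 'who do I ask?' for a decision type."""
    return next(role for role, code in RACI[decision].items() if code == "A")


def validate_raci(matrix: dict[str, dict[str, str]]) -> list[str]:
    """Flag decisions without exactly one Accountable or without any Responsible role."""
    problems = []
    for decision, assignments in matrix.items():
        codes = list(assignments.values())
        if codes.count("A") != 1:
            problems.append(
                f"{decision}: expected exactly one Accountable, found {codes.count('A')}"
            )
        if "R" not in codes:
            problems.append(f"{decision}: no Responsible role assigned")
    return problems


print(accountable_for("allow_regional_variation"))  # system_owner
print(validate_raci(RACI))                          # []
```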

Step 3: Create an intake and triage pipeline

Set up a single intake channel for system requests (issue tracker form or service desk). Require structured inputs so maintainers can evaluate quickly:

- Problem statement and context

- Who is blocked and how many teams are affected

- Tier impacted (1/2/3)

- Deadline and business impact

- Proposed solution (optional)

- Screenshots or examples

Triage weekly with a small group (system owner + maintainer + representative champion). Outcomes should be explicit: accept, defer, redirect to existing solution, or treat as exception.
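
The structured inputs and explicit outcomes above map naturally onto a small data model. This is a sketch under assumptions: the field names are hypothetical, and treating at least one screenshot or example as required is a choice made for the illustration.

```python
from dataclasses import dataclass, field
from enum import Enum


class TriageOutcome(Enum):
    ACCEPT = "accept"
    DEFER = "defer"
    REDIRECT = "redirect to existing solution"
    EXCEPTION = "treat as exception"


@dataclass
class IntakeRequest:
    problem_statement: str
    teams_affected: int
    tier: int
    deadline: str                 # ISO date string, e.g. "2025-03-01"
    business_impact: str
    examples: list[str] = field(default_factory=list)
    proposed_solution: str = ""   # optional

    def missing_inputs(self) -> list[str]:
        """List required inputs that are empty or out of range."""
        missing = []
        if not self.problem_statement.strip():
            missing.append("problem_statement")
        if self.teams_affected < 1:
            missing.append("teams_affected")
        if self.tier not in (1, 2, 3):
            missing.append("tier")
        if not self.deadline.strip():
            missing.append("deadline")
        if not self.business_impact.strip():
            missing.append("business_impact")
        if not self.examples:
            missing.append("examples")
        return missing


request = IntakeRequest(
    problem_statement="Promo module clips long headlines",
    teams_affected=3,
    tier=2,
    deadline="2025-03-01",
    business_impact="Blocks regional launch",
    examples=["headline-overflow.png"],
)
print(request.missing_inputs())  # []
```

An intake form can reject a submission while `missing_inputs()` is non-empty, so triage time is spent on decisions rather than on chasing context.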

Step 4: Establish contribution rules (especially in federated models)

If teams can contribute, define a contribution contract. A practical contribution checklist might include:

- Meets accessibility requirements and includes states

- Includes usage guidance and edge cases

- Includes implementation notes for engineering

- Includes test coverage expectations (unit/visual)

- Includes migration notes if it replaces something

Require contributors to provide a reference implementation or a sandbox example. This reduces “design-only” contributions that later break in production.

Step 5: Implement release management and deprecation policy

Consistency depends on predictable releases. Governance should define:

- Release cadence: e.g., monthly minor releases, quarterly major releases.

- Versioning rules: what counts as breaking vs. non-breaking for consumers.

- Deprecation windows: e.g., 90 days to migrate after deprecation notice for Tier 1 products.

- Migration support: guides, codemods where possible, and office hours.

Publish release notes that are actionable: what changed, who is impacted, what to do, and by when.
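
The versioning and deprecation rules can be sketched in a few lines. The example below assumes semantic-versioning-style numbers (breaking changes bump the major version, other releases bump the minor version) and the 90-day window used as an example above; the function names are hypothetical.

```python
from datetime import date, timedelta


def next_version(current: str, breaking: bool) -> str:
    """Bump the major version for breaking changes, otherwise the minor version."""
    major, minor, _patch = (int(part) for part in current.split("."))
    if breaking:
        return f"{major + 1}.0.0"
    return f"{major}.{minor + 1}.0"


def migration_deadline(notice: date, window_days: int = 90) -> date:
    """Date by which consumers must migrate after a deprecation notice."""
    return notice + timedelta(days=window_days)


def release_note(change: str, impacted: str, action: str, notice: date) -> str:
    """Render the four questions an actionable release note should answer."""
    return (
        f"What changed: {change}\n"
        f"Who is impacted: {impacted}\n"
        f"What to do: {action}\n"
        f"By when: {migration_deadline(notice).isoformat()}"
    )


print(next_version("2.3.1", breaking=True))    # 3.0.0
print(next_version("2.3.1", breaking=False))   # 2.4.0
print(migration_deadline(date(2024, 1, 1)))    # 2024-03-31
```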

Step 6: Define enforcement checkpoints in the delivery lifecycle

Map governance to existing workflows rather than adding parallel processes. Example checkpoints:

- Design kickoff: confirm tier and required checks.

- Midpoint review: validate system usage before too much custom work accumulates.

- Pre-merge: automated linting and tests required.

- Pre-launch: Tier 1 brand check using a checklist.

Each checkpoint should have a clear pass/fail criterion and an owner. Avoid vague “looks on brand” gates; use checklists and references.

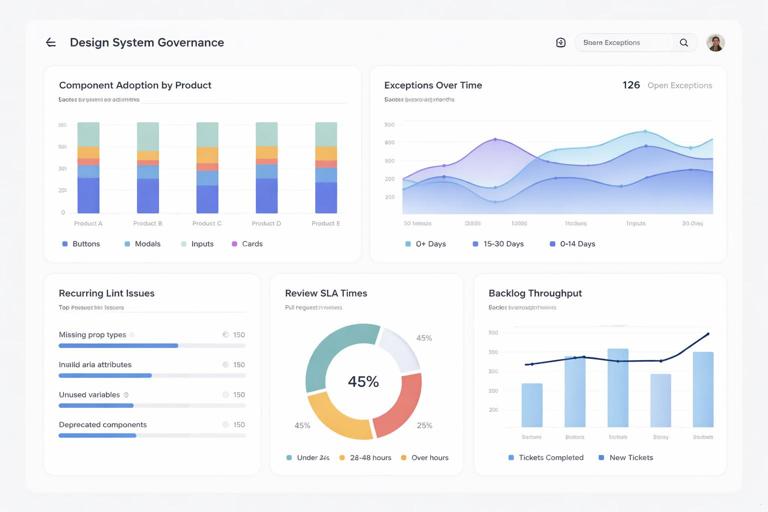

Step 7: Build a compliance dashboard (measure drift)

You cannot govern what you cannot see. Create a lightweight scorecard that tracks:

- Adoption rate of shared components (by product)

- Number of exceptions granted and their age

- Top recurring issues from linting/tests

- Time-to-approve for Tier 1 reviews

- Backlog health (intake volume vs. throughput)

Use the dashboard to drive improvements: if teams frequently request the same exception, the system may need an extension or clearer guidance.
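
A first version of the scorecard does not need a BI tool. The sketch below computes three of the metrics from plain data; the input shapes, the exception IDs, and the threshold for "frequently requested" are assumptions for illustration.

```python
from collections import Counter
from datetime import date


def adoption_by_product(usage: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Map product -> share of UI instances built from shared components."""
    return {
        product: round(shared / total, 2) if total else 0.0
        for product, (shared, total) in usage.items()
    }


def exception_ages(granted: dict[str, date], today: date) -> dict[str, int]:
    """Map exception ID -> age in days."""
    return {exc_id: (today - granted_on).days for exc_id, granted_on in granted.items()}


def recurring_requests(reasons: list[str], threshold: int = 3) -> list[str]:
    """Reasons requested at least `threshold` times: candidates for a system extension."""
    counts = Counter(reasons)
    return sorted(reason for reason, n in counts.items() if n >= threshold)


usage = {"checkout": (45, 50), "onboarding": (12, 40)}  # (shared, total) instances
print(adoption_by_product(usage))  # {'checkout': 0.9, 'onboarding': 0.3}
print(exception_ages({"EXC-1": date(2024, 5, 2)}, today=date(2024, 6, 1)))  # {'EXC-1': 30}
print(recurring_requests(["rtl-support"] * 3 + ["custom-banner"]))  # ['rtl-support']
```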

Practical examples of governance in action

Example 1: Regional team needs a localized promotional module

Scenario: A regional marketing team needs a promotional module that supports longer headlines and right-to-left layouts.

Governance flow:

- Team submits intake with examples and deadline.

- Triage classifies as Tier 2 and identifies cross-team value (localization support).

- Decision: create an extension module owned by the regional team but reviewed by maintainers.

- Contribution checklist requires RTL testing and accessibility validation.

- Release as an “extension” package; document constraints and when it can be used.

Consistency enforcement: automated checks ensure the module uses approved components; a pre-launch review is required only for the campaign landing page, not for every localized variant.

Example 2: Product team hard-codes styles to meet a deadline

Scenario: A product team bypasses shared components to ship quickly, introducing subtle inconsistencies.

Governance response:

- Code linting flags hard-coded values in Tier 1 surfaces and blocks merge.

- Team requests an exception due to deadline.

- The exception is granted for a limited scope, and a 30-day remediation ticket is created automatically.

- Champion works with maintainers to identify missing capability in the system that caused the workaround.

Consistency enforcement: the system prevents silent drift while still allowing a controlled, time-bound deviation.

Example 3: Agency delivers campaign assets that don’t match the system

Scenario: An agency delivers a campaign with custom UI elements and inconsistent spacing.

Governance flow:

- Agency onboarding includes a “deliverables contract” specifying required templates and component usage.

- Pre-production checkpoint: agency submits a small set of key frames for approval.

- System owner provides annotated feedback using a checklist.

- Final delivery is validated against the checklist before handoff.

Consistency enforcement: governance shifts review earlier (cheaper) and uses standardized checklists to avoid subjective debates late in production.

Checklists you can operationalize immediately

Tier 1 pre-launch brand consistency checklist

- All UI built from approved components; no one-off variants without an exception ID

- All interactive states present (hover, focus, disabled, error) where applicable

- Accessibility gates pass (contrast, keyboard navigation, screen reader labels)

- Localization and truncation tested for key languages

- Imagery and messaging approvals completed (if required by policy)

- Visual regression tests updated and reviewed for intentional changes

Exception request checklist

- What requirement cannot be met with existing system assets?

- Is this a temporary workaround or a candidate for system evolution?

- Scope: which screens/pages and which tier?

- Risk assessment: brand, accessibility, legal, performance

- Expiration date and remediation owner

- Link to tracking ticket and approval record
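
The exception checklist translates directly into a log entry with an expiration date, which is what makes exceptions time-bound in practice. The sketch below is a minimal illustration: the field names, the ID format, and the 30-day default are assumptions, not a prescribed schema.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class ExceptionRecord:
    exception_id: str
    unmet_requirement: str
    scope: str
    tier: int
    remediation_owner: str
    granted_on: date
    expires_on: date
    ticket: str  # reference to the tracking ticket and approval record

    def is_expired(self, today: date) -> bool:
        """An exception is still valid on its expiration date and expired after it."""
        return today > self.expires_on


def grant(exception_id: str, unmet_requirement: str, scope: str, tier: int,
          owner: str, ticket: str, granted_on: date, days: int = 30) -> ExceptionRecord:
    """Create a record whose expiration is derived from the grant date."""
    return ExceptionRecord(exception_id, unmet_requirement, scope, tier, owner,
                           granted_on, granted_on + timedelta(days=days), ticket)


def expired_ids(log: list[ExceptionRecord], today: date) -> list[str]:
    """IDs of exceptions that should be revisited because they have lapsed."""
    return [record.exception_id for record in log if record.is_expired(today)]


record = grant("EXC-7", "No compact pricing table", "checkout summary page", 1,
               "checkout-team", "TICKET-123", granted_on=date(2024, 4, 1))
print(record.expires_on)                         # 2024-05-01
print(expired_ids([record], date(2024, 5, 2)))   # ['EXC-7']
```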

Meeting cadence and artifacts that keep governance lightweight

Recommended cadence

- Weekly triage (30–45 min): intake review, prioritize, assign owners.

- Biweekly maintainer sync (45–60 min): implementation progress, upcoming releases, deprecations.

- Monthly champion forum (45–60 min): adoption issues, training needs, cross-team alignment.

- Quarterly governance review (60–90 min): metrics, policy updates, tier definitions, roadmap alignment.

Artifacts

- Governance charter (decision rights, tiers, SLAs)

- Public backlog and roadmap (what’s coming, what’s deferred)

- Release notes and migration guides

- Exception log with expiration dates

- Compliance dashboard

Implementation patterns for enforcement in code and workflow

Policy-as-code mindset

Where possible, convert governance rules into automated checks. Examples:

- Block merges when non-approved UI packages are used in Tier 1 repositories.

- Require visual regression approval for changes to shared components.

- Require accessibility test results attached to release candidates.

This reduces reliance on manual review and makes enforcement consistent across teams and time.
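
As a sketch of the first rule above, the check below reads a `package.json` manifest and reports UI packages that a Tier 1 repository is not allowed to depend on. It assumes a JavaScript project and a blocklist-style policy; the blocked package names are only an example of what such a policy might contain, and an allowlist would work equally well.

```python
import json

# Hypothetical policy: UI libraries that Tier 1 repositories may not depend on.
BLOCKED_UI_PACKAGES = {"bootstrap", "@mui/material"}


def non_approved_ui_packages(package_json: str, tier: int) -> list[str]:
    """Return blocked UI packages declared in a package.json; only Tier 1 is gated."""
    if tier != 1:
        return []
    manifest = json.loads(package_json)
    declared = set(manifest.get("dependencies", {})) | set(manifest.get("devDependencies", {}))
    return sorted(declared & BLOCKED_UI_PACKAGES)


manifest_text = json.dumps({
    "dependencies": {"@acme/brand-ui": "^3.0.0", "bootstrap": "^5.3.0"},  # @acme/* is made up
})
print(non_approved_ui_packages(manifest_text, tier=1))  # ['bootstrap']
print(non_approved_ui_packages(manifest_text, tier=3))  # []
```

A CI job could fail the merge whenever the returned list is non-empty and include the list in its error message, so the team sees exactly which dependency triggered the block.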

“Golden paths” for common work

Define golden paths: pre-approved ways to build common experiences (e.g., marketing landing page, product onboarding flow, email template set). Governance maintains these paths, and teams are encouraged to start from them. Enforcement becomes simpler because deviations are obvious and intentional.

Escalation ladder (rarely used, but necessary)

When teams repeatedly bypass the system, governance needs a clear escalation ladder:

- Champion coaching and support

- Maintainer intervention to remove blockers (missing component, unclear guidance)

- System owner review for repeated exceptions

- Leadership escalation only for unresolved Tier 1 risks

The ladder should focus on removing root causes first, not punishment.